By Shalom Goffri, PhD, Vice President of Valuation & Portfolio Management, Ascend Analytics

As hyperscalers and data center developers race to meet surging AI-driven demand, speed to market is paramount – which means site selection can make or break a project.

Finding the right site requires being able to quickly and accurately evaluate a series of complex and interconnected factors. Developers must be able to integrate transmission network modeling, nodal energy price forecasting, and fundamental-based production cost dispatch simulation into a single workflow, all while simultaneously evaluating interconnection feasibility, long-term energy economics, power deliverability, and flexible load potential.

In this context, leveraging a partner that offers sophisticated siting analytics software and expertise can provide immense competitive advantages to accelerate speed to market, as well as minimize costs and risks.

The following six factors are also vital for optimizing data center siting decisions.

While this question may seem extremely basic, answering it adequately can be very complex. Why? Specific location matters greatly in a siting analysis. Substation headroom, which is the available capacity to interconnect load without triggering costly network upgrades, can vary significantly from site to site.

Even though sites that require extensive upgrades may be technically feasible, multiple factors can render projects commercially or socially unviable. These factors include:

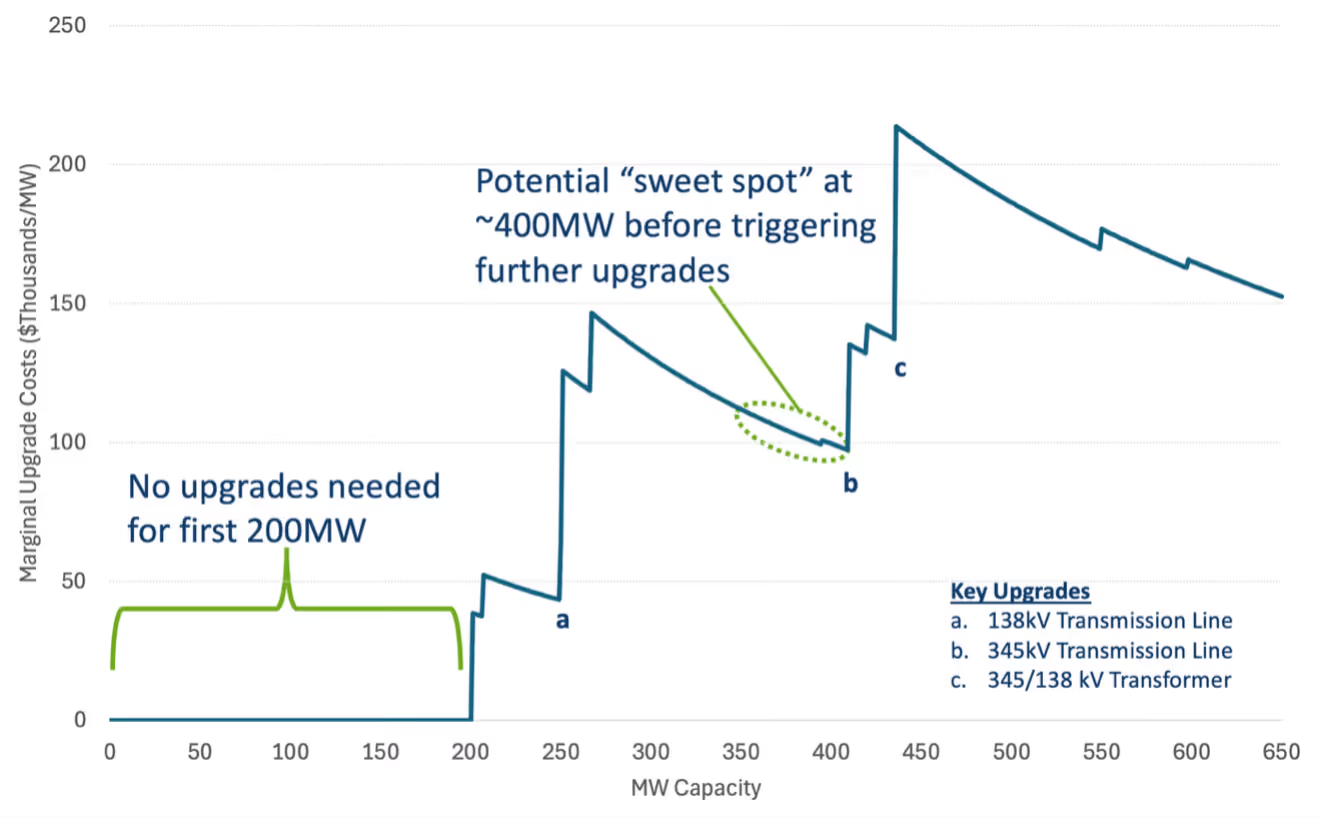

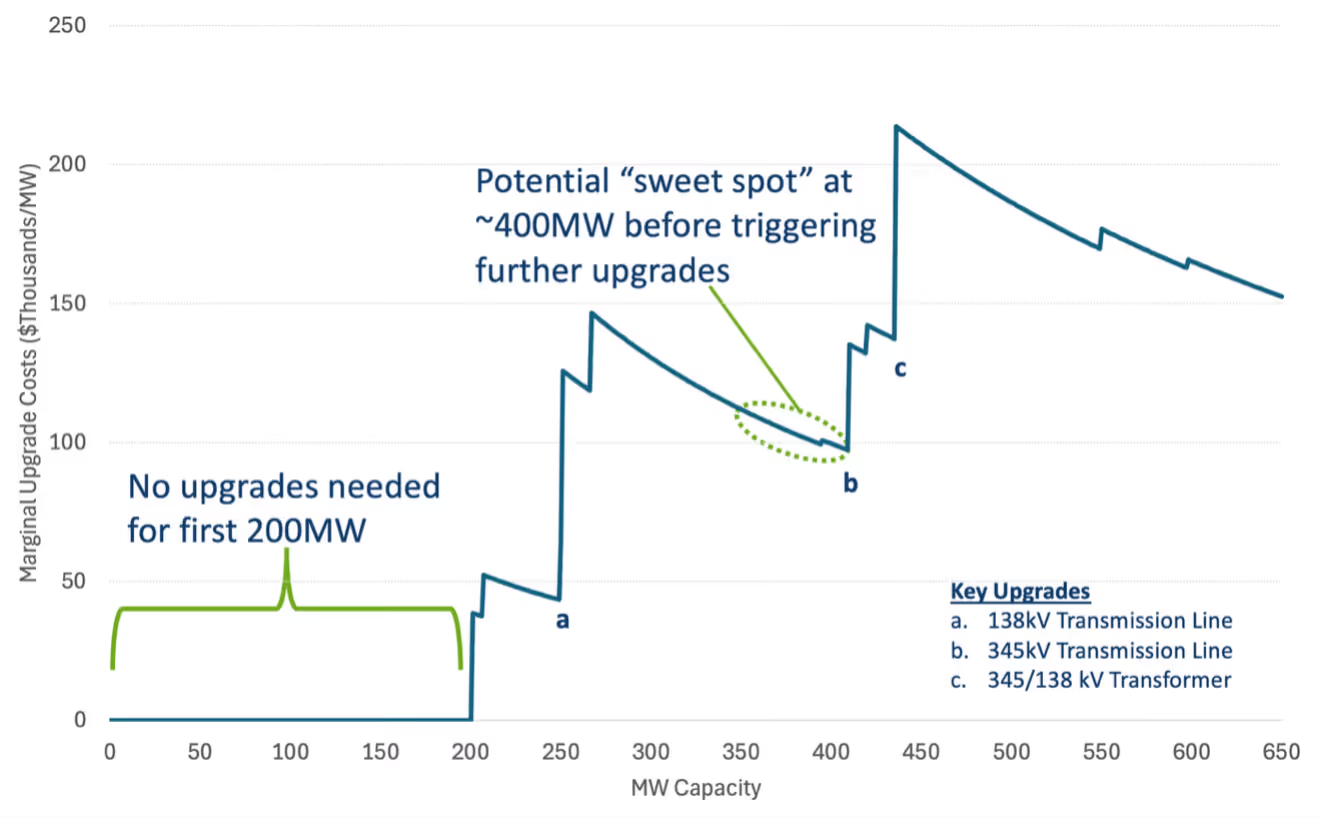

It is critical, then, for data center developers to have a granular view of substation-level headroom in order to understand the range of potential infrastructure upgrade costs and where load can be accommodated with minimal system changes, as illustrated in Figure 1.

The ability to interconnect is different from having access to reliable power delivery. A site can be technically connected to the grid and still face meaningful constraints on how reliably power reaches the facility.

For instance, a site located in a heavily congested area may find that, during hours when demand is highest and power is most expensive, deliverability is constrained. Integrating a power flow analysis helps developers understand the expected frequency, duration, and severity of such events, and then model them under scenarios that account for the new load’s impact on local grid dynamics.
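As a rough illustration of what "frequency, duration, and severity" can mean in practice, the sketch below summarizes constraint events from an hourly series of deliverable capacity at a node. The function name, the `ConstraintSummary` fields, and the sample numbers are hypothetical, and the hourly series is assumed to come from a separate power flow study; this is not a description of any particular vendor's method.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class ConstraintSummary:
    event_count: int            # frequency: distinct runs of constrained hours
    constrained_hours: int      # total hours with any shortfall
    longest_event_hours: int    # duration of the longest single event
    max_shortfall_mw: float     # severity: worst hourly shortfall
    unserved_energy_mwh: float  # shortfall energy summed over the series


def summarize_constraints(deliverable_mw: Sequence[float], load_mw: float) -> ConstraintSummary:
    """Summarize hours in which deliverable capacity falls below the load.

    Assumes one value per hour, so a shortfall of X MW in an hour is X MWh.
    """
    event_count = constrained_hours = longest = current_run = 0
    max_shortfall = 0.0
    unserved = 0.0
    for available in deliverable_mw:
        shortfall = load_mw - available
        if shortfall > 0:
            if current_run == 0:
                event_count += 1  # a new event starts after an unconstrained hour
            current_run += 1
            constrained_hours += 1
            longest = max(longest, current_run)
            max_shortfall = max(max_shortfall, shortfall)
            unserved += shortfall
        else:
            current_run = 0
    return ConstraintSummary(event_count, constrained_hours, longest, max_shortfall, unserved)


# Hypothetical seven-hour series for a 100 MW load.
summary = summarize_constraints([100, 100, 80, 70, 100, 90, 100], load_mw=100)
print(summary)
```

In this toy series there are two events covering three hours, the longest lasting two hours, with a worst shortfall of 30 MW. The same summary could be recomputed under scenarios in which the new load changes local deliverability.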

Advanced modeling can also help data centers understand weather-correlated reliability risks and how best to mitigate them. For example, data center reliability evaluations often focus on summer peaks, but winter conditions increasingly pose the greatest risks to grid reliability and present operational challenges for both renewable and thermal asset types.

Clearly understanding power deliverability is essential for realistically assessing both speed to market and operational risk. It also allows developers to create curtailment coverage strategies, which may include leveraging behind-the-meter generation or storage to create flexible load, that are determined by the grid’s actual behavior at a given node rather than a generalized rule of thumb.

Finding locations where the grid can absorb load without costly, time-consuming upgrades is far from easy, and requires a view that goes beyond available land, interconnection availability, and cost-to-connect.

In this context, a sophisticated siting analysis becomes a competitive advantage. Being able to quickly understand how – and by how much – congestion and/or curtailment events may limit power availability at a given node allows developers to clearly assess whether flexible load strategies such as behind-the-meter (BTM) storage and bring-your-own-capacity (BYOC) arrangements could enable interconnection at locations that would otherwise require extensive infrastructure work.

Location-specific power flow modeling that accounts for the presence of new load is also essential in helping to identify sites where flexible interconnection is genuinely feasible.

Flexible load strategies can produce benefits that go far beyond optimizing site operations. These include:

The first, and perhaps most obvious, benefit of flexible load is that it can increase grid utilization. A transmission system that would otherwise need to be upgraded to handle a single peak event that occurs during a handful of hours per year can instead be served by a data center that draws on storage during those periods. This allows grid assets to be more fully utilized, thus spreading fixed infrastructure costs across more consumption, decreasing customer rates.
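The rate effect described here is simple arithmetic: if fixed infrastructure costs stay the same while delivered energy rises, the fixed cost carried by each MWh falls. The sketch below uses entirely hypothetical numbers and a hypothetical function name, and it assumes the added load triggers no new upgrade costs, which is the premise of the flexible load case rather than a general result.

```python
def fixed_cost_per_mwh(annual_fixed_cost_usd: float, annual_energy_mwh: float) -> float:
    """Average fixed infrastructure cost recovered per MWh delivered."""
    if annual_energy_mwh <= 0:
        raise ValueError("annual_energy_mwh must be positive")
    return annual_fixed_cost_usd / annual_energy_mwh


# Hypothetical system: $100 million of fixed cost recovered over 1,000,000 MWh.
before = fixed_cost_per_mwh(100_000_000, 1_000_000)

# Same fixed cost after a flexible load adds 250,000 MWh without new upgrades.
after = fixed_cost_per_mwh(100_000_000, 1_250_000)

print(before, after)  # 100.0 80.0
```

If the same load instead required upgrades, the numerator would grow as well, and the per-MWh figure could rise rather than fall.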

This is not a marginal consideration. In many markets where data center development is most active, rate affordability has become a significant issue. Community opposition and regulatory resistance to new large-load interconnections are increasingly common, driven in part by the perception that industrial-scale power demand raises electricity costs for residential and small commercial customers who have no stake in the upside.

A data center designed around flexible load strategies can credibly make the case that it is increasing grid utilization rather than simply claiming scarce headroom, and that its presence supports rather than undermines affordability for surrounding communities. In markets where permitting, utility negotiations, or public acceptance are genuine bottlenecks, flexible load becomes a meaningful competitive and political asset.

While interconnection and speed are important early-stage siting factors, long-term energy costs remain one of the most consequential – and often underweighted – factors in site selection, and they are likely to become even more important as the industry gets more competitive. Data centers have operating horizons measured in decades. Over that timeframe, differences in locational energy prices can translate into substantial cost disparities.

As illustrated in Figure 2, a 500 MW load sited at a P90 energy cost node might expect to pay over $50 million more per year in energy costs than the same load at a P10 node. Over a 20-year asset life, that differential adds up to over $1 billion in additional costs. As new load is added, these dynamics can shift further, meaning that a site’s cost profile is influenced by both current conditions and future development.
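The arithmetic behind these figures can be sketched as follows. The function names, the 8,760-hour year, and the flat 100% load factor are illustrative assumptions, not details of the underlying analysis, and the lifetime total is a simple undiscounted sum.

```python
HOURS_PER_YEAR = 8760  # assumption: non-leap year


def annual_energy_cost_gap(load_mw: float, price_gap_usd_per_mwh: float,
                           load_factor: float = 1.0) -> float:
    """Annual cost difference (USD) between two nodes serving the same load."""
    return load_mw * load_factor * HOURS_PER_YEAR * price_gap_usd_per_mwh


def implied_price_gap(load_mw: float, annual_gap_usd: float,
                      load_factor: float = 1.0) -> float:
    """Average price spread (USD/MWh) implied by an annual cost difference."""
    return annual_gap_usd / (load_mw * load_factor * HOURS_PER_YEAR)


# A 500 MW load paying $50 million more per year implies this average spread.
spread = implied_price_gap(500, 50_000_000)
print(round(spread, 2))  # 11.42

# Undiscounted total over a 20-year asset life.
print(50_000_000 * 20)  # 1000000000
```

Under these assumptions, a spread of roughly $11 per MWh between two nodes is enough to produce the annual difference cited above. A lower load factor would require a proportionally larger spread to reach the same total.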

Though some market participants continue to minimize the importance of energy costs, that view is beginning to change. For a developer selling capacity to a hyperscaler, offering a structurally lower cost of operation is a genuine competitive differentiator. For a hyperscaler making long-term infrastructure commitments, the case for energy cost optimization is straightforward and will grow in importance in a competitive environment.

During the siting process, then, it is vital to leverage a credible long-term energy price forecast that accounts for how nodal prices respond to load changes, evolving grid profiles, generation mix changes, and policy shifts. Understanding how a specific site’s energy cost profile is likely to evolve over a 30-year horizon requires detailed power market modeling, rather than a static benchmark or regional average.

For developers looking to move faster than backlogged interconnection queues allow, the idea of fully or partially off-grid data centers powered by on-site, behind-the-meter natural gas generation has become increasingly popular. While this approach is both understandable and feasible, the trade-offs can be significant. These include:

Ultimately, the most effective approaches to data center siting leverage a unified software platform that integrates granular geospatial insights with a workflow that allows for the simultaneous optimization of available land, interconnection capability, and long-term energy costs. Doing so allows developers to move beyond basic site selection and toward a unified siting strategy capable of quickly identifying locations that are optimal in terms of speed to market, location, and cost.

Ascend Analytics is the leading provider of market intelligence and analytics solutions for the power industry.

The company’s offerings enable decision makers in power development and supply procurement to maximize the value of planning, operating, and managing risk for renewable, storage, and other assets. From real-time to 30-year horizons, their forecasts and insights are at the foundation of over $50 billion in project financing assessments.

Ascend provides energy market stakeholders with the clarity and confidence to successfully navigate the rapidly shifting energy landscape.