As load growth soars in U.S. energy markets, data center site selection has become far more than a real estate and fiber routing decision for developers and hyperscalers. With interconnection queues worsening and nodal price differences across the country widening, data center siting has become a transmission access and long-term economics decision, with slim margins for error. In the race to secure power quickly, site selection without rigorous transmission modeling and nodal energy cost analysis represents both a significant market risk and a material financial risk.

In a recent Ascend Analytics webinar on transmission and market modeling for data center and power project siting, Robert LaFaso, Director of Forecasting and Valuation, Dr. Michael Fisher, Managing Director of Evaluation Services, and Dr. Shalom Goffri, VP of Valuation and Portfolio Management, discussed critical considerations for siting decisions, including initial interconnection screening, dynamic power flow modeling, and flexible load design.

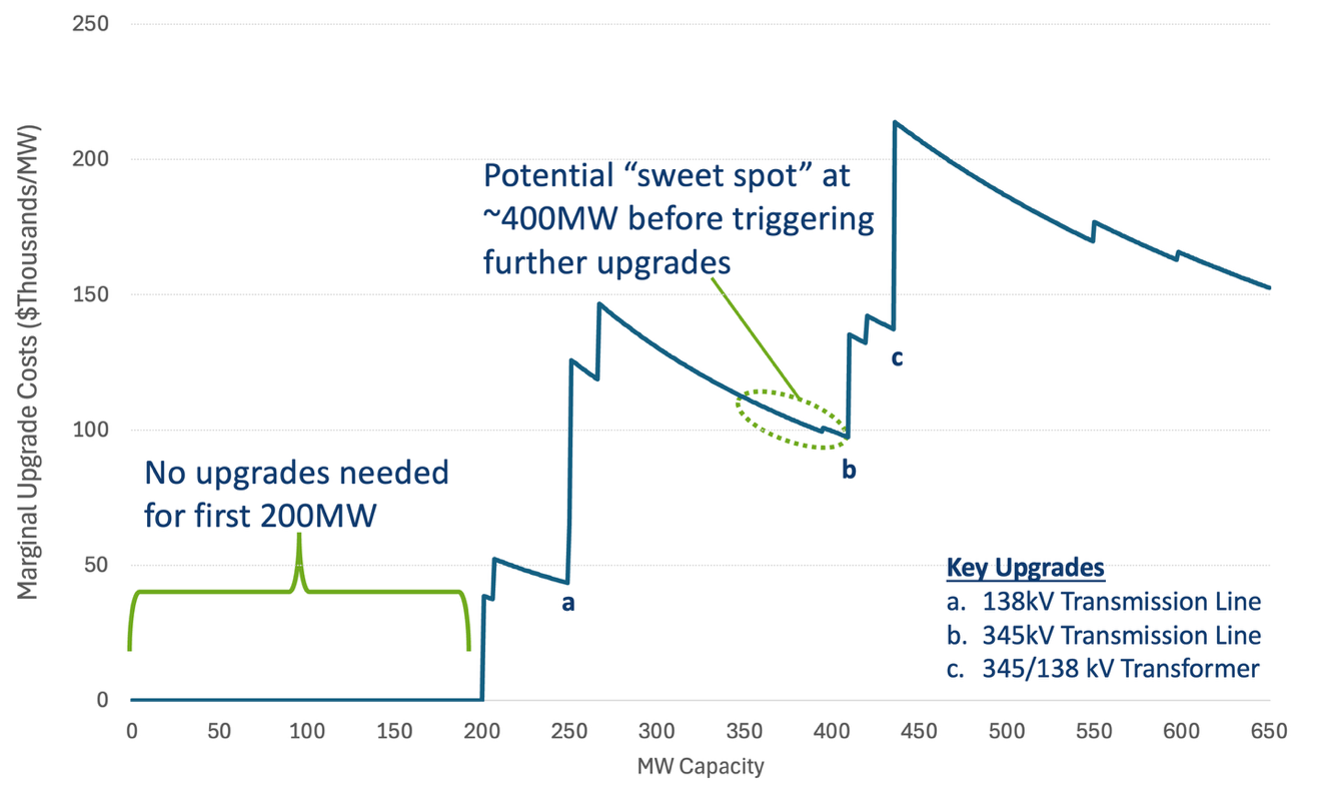

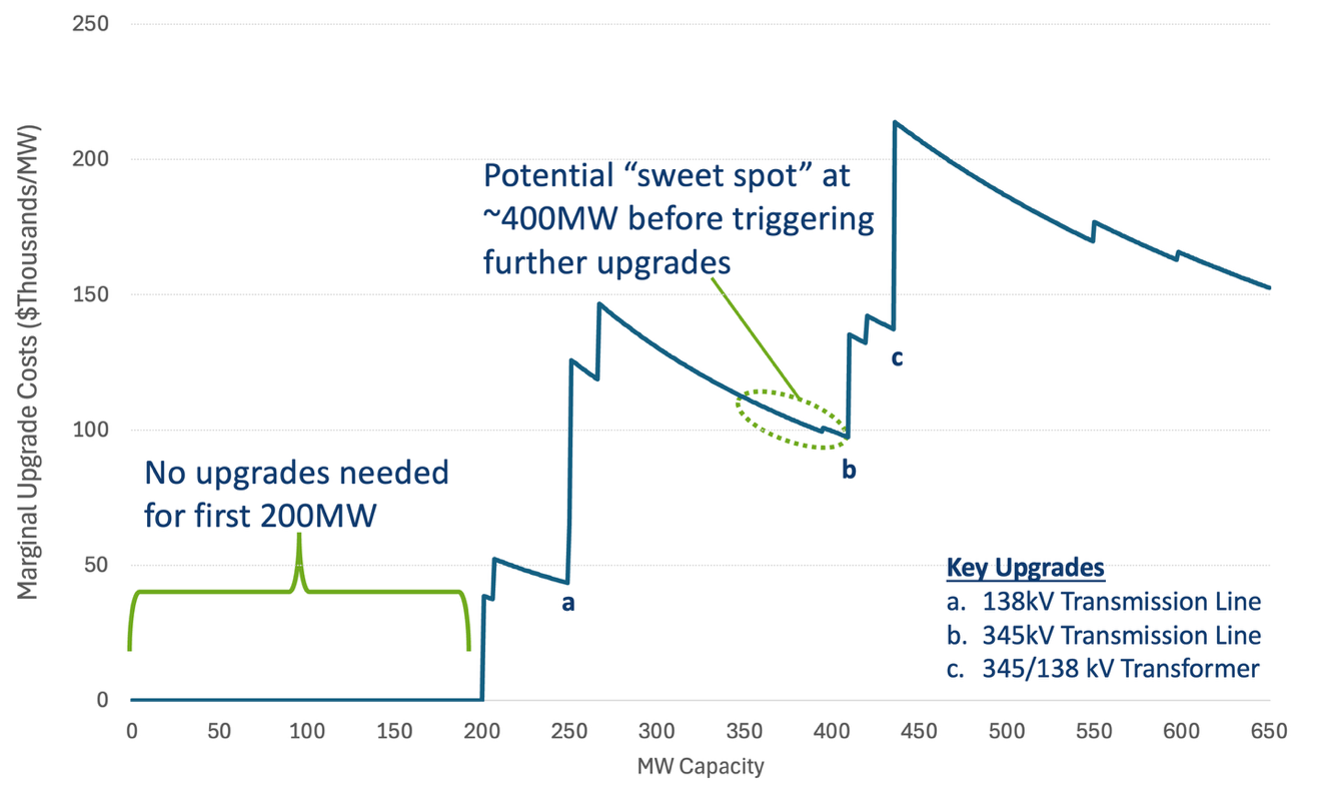

Standard siting analyses screen for headroom availability and cost to connect, measured as total network upgrade cost. While both are necessary inputs, treating upgrade cost as a single number obscures a more granular and actionable insight: upgrade costs are not linear. Upgrade costs increase in discrete steps as successive infrastructure improvements are triggered, and the impact of those steps can be significant. As illustrated in Figure 1, a developer who opts for a 400 MW project instead of 450 MW avoids more than $50 million in network upgrades, as well as the multi-year supply chain timelines that come with large transformer procurement.

This means that sizing decisions must be made in direct reference to substation-specific upgrade cost curves, not general rules of thumb about project scale. Understanding where thresholds are, what triggers them, and any related supply chain implications is foundational for ensuring both speed to power and economic value.
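A stepwise cost curve of this kind can be sketched in a few lines of Python. The thresholds and dollar figures below are hypothetical placeholders chosen only to illustrate the shape of the curve; they are not values from the webinar or from any real substation, and the function names are invented for this example.

```python
from bisect import bisect_left

# Hypothetical upgrade cost curve for one substation.
# Each tuple is (threshold_mw, cumulative_cost_musd): once the project size
# strictly exceeds threshold_mw, the cumulative upgrade cost is that amount.
# All numbers are illustrative assumptions, not real study results.
UPGRADE_STEPS = [
    (0, 0.0),
    (250, 8.0),
    (400, 60.0),
    (600, 95.0),
]


def upgrade_cost(size_mw, steps=UPGRADE_STEPS):
    """Cumulative network upgrade cost ($M) for a project of size_mw."""
    thresholds = [t for t, _ in steps]
    # Count the thresholds strictly below size_mw; the last one is the active step.
    idx = max(bisect_left(thresholds, size_mw) - 1, 0)
    return steps[idx][1]


def largest_size_within_budget(budget_musd, max_mw, steps=UPGRADE_STEPS):
    """Largest project size (MW, capped at max_mw) whose upgrade cost fits the budget."""
    for threshold, cost in steps:
        if cost > budget_musd:
            # Sizing exactly at the threshold avoids triggering this step.
            return min(threshold, max_mw)
    return max_mw


if __name__ == "__main__":
    print(upgrade_cost(400))                      # cost just below the hypothetical step
    print(upgrade_cost(450))                      # cost after the step is triggered
    print(largest_size_within_budget(10.0, 500))  # size that stays under a $10M budget
```

In this toy curve, moving from 400 MW to 450 MW crosses a single threshold and changes the cost by far more than the extra 50 MW would suggest under a linear rule of thumb, which is the effect a substation-specific curve is meant to expose.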

Static nodal energy cost analysis examines historical or forecasted prices at a given node as if new large loads were entering the market at generic locations rather than at the specific substations of interest. As a result, it fails to adequately account for what happens when a large load actually withdraws power from that node. For example, adding 500 MW of load to a substation changes the local supply-demand balance, and that change is reflected in nodal prices. For developers, understanding the magnitude of that effect requires understanding how much headroom a substation has relative to the size of the new load. As with project sizing, this impact is highly non-linear: energy costs often move stepwise as more expensive units are required to serve growing loads.

Dynamic price formation analysis can help developers gain a clearer understanding of the impact of projected load on a specific node. As illustrated in Figure 2, the same load at different substations produces very different energy cost outcomes. At a site with 570 MW of headroom absorbing a 500 MW data center load, peak prices during evening hours rise to roughly $80/MWh. At a site with only 22 MW of stress-case headroom serving the same 500 MW load, peak prices spike to $210/MWh during congested and curtailed hours, because the transmission system cannot efficiently deliver the power required; the resulting congestion drives locational marginal prices sharply higher.
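The stepwise behavior can be illustrated with a deliberately simplified, single-node toy model. This is a sketch under strong assumptions and not Ascend's methodology: headroom is treated as a hard import limit, power within that limit is priced at a flat system price, and any load beyond it is served by local units in merit order. The function name, the system price, and the local supply stack are all hypothetical; only the 500 MW load and the 570 MW and 22 MW headroom values are borrowed from the example above, and the resulting prices are not the Figure 2 results.

```python
# Hypothetical local supply stack: (capacity_mw, marginal_cost_usd_per_mwh).
# Illustrative assumptions only.
LOCAL_STACK = [
    (200, 70.0),
    (300, 150.0),
]


def nodal_price(load_mw, headroom_mw, system_price, local_stack=LOCAL_STACK):
    """Toy nodal price ($/MWh) for a new load at a substation.

    Load up to headroom_mw is served at system_price. Load beyond the
    headroom is served by local units, cheapest first, and the last unit
    needed sets the price.
    """
    if load_mw <= headroom_mw:
        return system_price
    remaining = load_mw - headroom_mw
    for capacity_mw, marginal_cost in sorted(local_stack, key=lambda u: u[1]):
        remaining -= capacity_mw
        if remaining <= 0:
            return max(marginal_cost, system_price)
    # Not enough local supply: in practice the load would be curtailed.
    raise ValueError("load exceeds headroom plus local supply")


if __name__ == "__main__":
    for headroom in (570, 22):
        print(headroom, nodal_price(500, headroom, system_price=45.0))
```

Even in this crude form, the same 500 MW load clears at the system price at the high-headroom site and at the most expensive local unit at the constrained site. A production analysis would replace the toy stack with a full network model and hourly dispatch.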

Dynamic price formation analysis also reveals insights about the potential value of flexible interconnection. When a site has limited headroom and a data center itself is driving congestion, the value of behind-the-meter (BTM) storage goes up: beyond covering curtailment events that might have occurred anyway, the storage actively prevents the extreme price spikes that the data center's presence might create. Thus, BTM storage becomes both a reliability asset and a hedge against self-induced energy cost inflation. Both the 22 MW headroom substation and the 570 MW headroom substation can accommodate a 500 MW load without increasing prices, provided the load can either flex down consumption or island for 4-8 hours during summer evenings.

There is no one-size-fits-all approach when it comes to flexible interconnection strategies, which depend greatly on a site's specific curtailment profile. The amount of BTM storage or on-site generation required depends on three site-specific factors: the number of curtailment events per year, the duration of each event, and how many MW are curtailed during each event. A site with infrequent, shallow curtailments may need only modest BTM resources. A site with frequent, deep curtailments during a concentrated window, such as during summer afternoons in heat-stressed markets, may require a substantially larger resource.
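As a rough illustration of how those three factors translate into a resource size, the sketch below derives a first-pass power and energy rating from a list of curtailment events. It assumes each event has a constant curtailment depth, that the storage fully recharges between events, and that losses and degradation are ignored. The class, function, and event data are hypothetical and exist only for this example.

```python
from dataclasses import dataclass


@dataclass
class CurtailmentEvent:
    duration_h: float    # how long the event lasts
    curtailed_mw: float  # how many MW the load must shed or self-supply


def size_btm_storage(events):
    """Return (power_mw, energy_mwh, event_count) needed to cover every event.

    Power is set by the deepest event; energy is set by the event with the
    largest depth x duration product. Assumes full recharge between events.
    """
    if not events:
        return 0.0, 0.0, 0
    power_mw = max(e.curtailed_mw for e in events)
    energy_mwh = max(e.curtailed_mw * e.duration_h for e in events)
    return power_mw, energy_mwh, len(events)


if __name__ == "__main__":
    # Hypothetical profiles, for illustration only.
    shallow = [CurtailmentEvent(1.0, 20.0), CurtailmentEvent(2.0, 15.0)]
    deep = [
        CurtailmentEvent(4.0, 100.0),
        CurtailmentEvent(6.0, 80.0),
        CurtailmentEvent(4.0, 100.0),
    ]
    print(size_btm_storage(shallow))
    print(size_btm_storage(deep))
```

The event count does not change the rating under these assumptions, but it matters for cycling, degradation, and how often the resource is unavailable for market participation, so a fuller analysis would track it alongside power and energy.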

Transmission analysis and site-level modeling can help data centers design cost-effective solutions that meet availability, reliability, and on-site dispatch criteria, while opening additional viable sites. For example, Ascend used a flexible interconnection framework to analyze a Dallas-area substation with 300 MW of existing headroom, where a 1 GW data center under standard interconnection rules would require roughly $40-$50 million in upgrades and multi-year transformer procurement. By deploying a 100 MW 4-hour BTM battery energy storage system (BESS), the load could access the site without triggering upgrades and could reliably cover any curtailment- or congestion-related event. This approach takes advantage of historically conservative transmission planning rules, which assess available capacity under extreme-event conditions. In more typical loading, the load only needs a much smaller 100 MW BESS to manage substation constraints.

While the investment in a BTM BESS in this case could be significant, especially if the load requires high reliability during critical peak events, it would also ensure far greater speed to market by avoiding upgrades (and upgrade costs) and sidestepping supply chain constraints. Because it positions the load as a demand-side market resource, a BTM BESS would also be a potential revenue-generating asset during the vast majority of hours when it is not needed to ensure reliability.

The full webinar recording offers additional insights related to transmission modeling, nodal energy cost analysis, flexible interconnection design, and data center and power project siting across ERCOT and other major ISOs.

Trusted across hundreds of deals and more than $1 billion in transaction value, PowerVAL provides bankable revenue forecasts and nodal-specific valuations for storage, renewables, and hybrid projects under multiple operating strategies across all ISOs. Please contact us to learn more.

Ascend Analytics is the leading provider of market intelligence and analytics solutions for the power industry.

The company’s offerings enable decision makers in power development and supply procurement to maximize the value of planning, operating, and managing risk for renewable, storage, and other assets. From real-time to 30-year horizons, its forecasts and insights are at the foundation of over $50 billion in project financing assessments.

Ascend provides energy market stakeholders with the clarity and confidence to successfully navigate the rapidly shifting energy landscape.